Microsoft’s newly released Digital Defense Report – a comprehensive analysis that drills into cybersecurity for a wide range of business and tech considerations – delivers new insights into the threats users face from AI and identifies a range of AI-enhanced defenses they can activate.

The 2025 Digital Defense Report is developed on the strength of vast amounts of security data that Microsoft collects and analyzes. Here are three particularly indicative data points:

- 100 trillion security signals processed daily

- 15,000+ partners in its security ecosystem

- 5 billion emails screened daily for malware and phishing

The report delivers a comprehensive view into the current threat and defense landscapes, while making specific defensive recommendations to customers. The full report is recommended reading; in this analysis, I aim to pull out key AI-centric findings in both the threat and defense categories.

AI-Driven Threats

Microsoft points to a frequently cited conundrum when it comes to AI and cybersecurity: AI can be – and is being – used to build and launch more sophisticated and scalable attacks, while simultaneously increasing defenders’ ability to keep pace and operate at a scale comparable to that of the attackers.

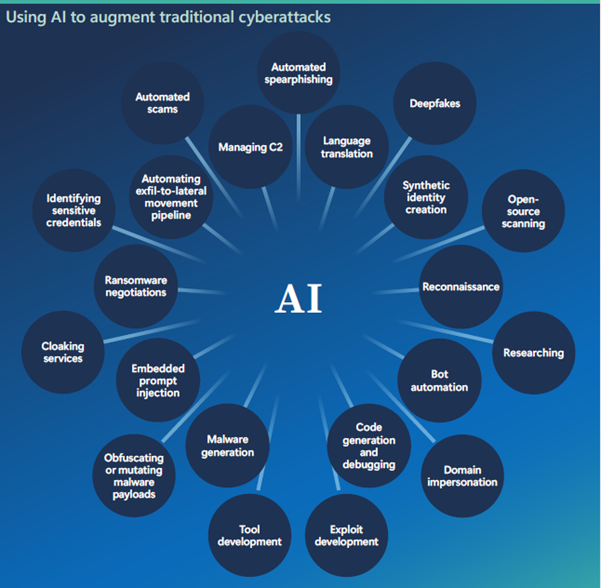

Broadly, Microsoft refers to attackers’ use of AI to expand their activities as “cyberattack augmentation” and lists nearly two dozen types of attacks where AI is being used to automate activities that were previously far more time-consuming. See the chart below. My takeaway: it puts an exclamation point on the expansive opportunity AI presents to the bad guys.

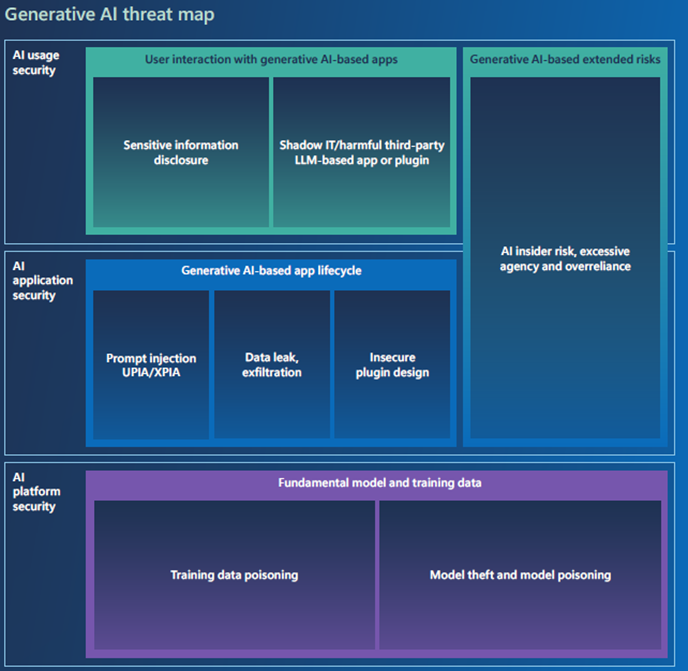

AI is not only increasing the speed and ambitions of attackers; it’s also creating a greater breadth of threats spanning AI usage, AI applications, and AI platforms, the report states. Challenges arising because of this breadth include sensitive data exposure, prompt injection, insecure plugin design, and foundational threats such as model theft and training data poisoning. The report includes a graphic with a more complete list.

Against this backdrop, Microsoft’s report notes a series of risks that affect all AI users:

- Overreliance on AI, including treating the tech as a fully capable, independent decision maker rather than the augmentation tool that it is. Attackers can exploit this weakness by feeding manipulated data or crafting deceptive scenarios to enter false information into systems or trigger operational disruptions.

- Information leakage, which can be exploited during runtime or from training data to expose sensitive organizational details. The report notes that AI systems managing customer interactions or proprietary data are prime attack targets for those looking to leak information.

- AI agent risks, which can arise if attackers inject biased data so an AI agent prioritizes advertisers or malicious actors over users. The antidotes to these risks, Microsoft says, include transparency, explainability, and strong governance.

- Other AI-related risks raised in the report include logging and monitoring of AI use that can expose sensitive data, nation-state abuse of AI in influence operations through tactics such as training data poisoning, and increased speed and sophistication of fraud and social engineering.

The foregoing make up a subset of the ways attackers can and will exploit AI to make their work faster, more scalable, more destructive, and more difficult to detect.

Three Defense Categories Boosted by AI

The Microsoft report cites a wide range of ways that AI can be used to defend against the new AI-powered attack perpetrators and methods. I’ll cover three in this analysis: AI-powered threat detection, protecting identity security, and using automation to combat cybercrime.

AI-enhanced threat detection is embodied in the Detection Engineering discipline that’s operating in strong cybersecurity organizations. It can be applied – and is being applied within Microsoft – to a range of detection tasks.

These detection tasks include threat analysis, which benefits from AI’s ability to quickly and effectively extract the most relevant information from vast quantities of data. AI models, Microsoft noted, are well suited to extracting commonalities across threats, as well as kill chains.

AI can also identify detection gaps at scale in order to isolate areas that need more coverage. Detection authoring solutions can then leverage AI to create detections at different levels of sophistication, ranging from basic detections to advanced, behavioral ones. Finally, detection validation is a function well suited to AI agents that can be used to simulate complex, multi-stage attacks, enabling validation at scale in a red teaming approach.
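To make the idea of a detection gap concrete, here is a minimal sketch in Python. It is not drawn from the report: the `Detection` class, the rule names, and the sample data are hypothetical, and the technique IDs are used only as illustrative labels. It simply compares the techniques seen in threat activity against the techniques that existing detections claim to cover.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """A hypothetical detection rule and the technique IDs it claims to cover."""
    name: str
    techniques: frozenset


def find_coverage_gaps(observed, detections):
    """Return observed technique IDs that no detection covers, sorted."""
    covered = set()
    for detection in detections:
        covered |= detection.techniques
    return sorted(set(observed) - covered)


# Illustrative data only: rule names and technique labels are assumptions.
detections = [
    Detection("failed-login-burst", frozenset({"T1110"})),
    Detection("suspicious-attachment", frozenset({"T1566"})),
]
observed = {"T1110", "T1566", "T1078"}

gaps = find_coverage_gaps(observed, detections)
print(gaps)  # ['T1078']
```

In practice, the hard part is the step this sketch takes for granted: mapping raw threat data and existing rules to techniques in the first place, which is where the report sees AI helping at scale.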

When it comes to securing identity, Microsoft notes that “Modern AI-driven identity protection systems continuously analyze billions of sign-ins and user signals, learning what normal behavior looks like for each user and entity so they can spot the early signs of an attack.” The report goes on to identify several types of agents that will be instrumental to identity security:

- Policy enforcement agents that review configurations and policies

- Credential hygiene agents that monitor secrets and credentials

- Application risk detection agents that monitor app permissions and behaviors

- User lifecycle agents that automatically assign the correct permissions based on attributes
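The notion of "learning what normal behavior looks like for each user" can be illustrated with a deliberately simple sketch. This is not how Microsoft's systems work; the `SignInBaseline` class, its `min_history` threshold, and the choice of country and device as signals are all assumptions made for illustration. The sketch records where each user has signed in from and flags a sign-in that falls outside that history.

```python
from collections import defaultdict


class SignInBaseline:
    """Hypothetical per-user baseline of (country, device) sign-in pairs."""

    def __init__(self, min_history=3):
        # Assumed threshold: do not judge users with too little history.
        self.min_history = min_history
        self._seen = defaultdict(set)
        self._count = defaultdict(int)

    def observe(self, user, country, device):
        """Record a sign-in that is considered legitimate."""
        self._seen[user].add((country, device))
        self._count[user] += 1

    def is_unusual(self, user, country, device):
        """Return True if the sign-in deviates from the user's known history."""
        if self._count.get(user, 0) < self.min_history:
            return False  # not enough history to form a baseline
        return (country, device) not in self._seen.get(user, set())


baseline = SignInBaseline()
for _ in range(3):
    baseline.observe("alice", "US", "laptop-1")

print(baseline.is_unusual("alice", "US", "laptop-1"))        # False
print(baseline.is_unusual("alice", "BR", "unknown-device"))  # True
print(baseline.is_unusual("bob", "BR", "unknown-device"))    # False (no history yet)
```

A production system would weigh many more signals and score risk rather than return a yes/no answer, but the underlying pattern of comparing each event to a per-user baseline is the same.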

Microsoft’s Digital Crimes Unit (DCU) is actively using automation to combat the sophistication and speed of AI-powered cybercrime. For example, it’s developed AI to analyze “password spray” attacks to differentiate normal and targeted behavior. It’s also developed tools to block phishing campaigns that emanate from deceptive URLs. In so doing, the company says it’s shifting the cybercrime balance on the strength of AI and it’s providing a solid example of how automation can be used to combat malicious actors.
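For readers unfamiliar with the pattern, a password spray typically shows up as one source trying a small number of passwords against many different accounts. The sketch below is a simple rule-based heuristic, not the DCU's AI approach, which the report does not detail; the function name `find_spray_sources`, both thresholds, and the sample events are assumptions for illustration.

```python
from collections import defaultdict


def find_spray_sources(failed_logins, min_accounts=5, max_attempts_per_account=2):
    """Flag sources that fail against many accounts with few tries on each.

    failed_logins: iterable of (source_ip, username) tuples for failed sign-ins.
    Both thresholds are illustrative assumptions, not recommended values.
    """
    attempts = defaultdict(lambda: defaultdict(int))
    for source_ip, username in failed_logins:
        attempts[source_ip][username] += 1

    suspects = []
    for source_ip, per_account in attempts.items():
        many_accounts = len(per_account) >= min_accounts
        few_tries_each = max(per_account.values()) <= max_attempts_per_account
        if many_accounts and few_tries_each:
            suspects.append(source_ip)
    return sorted(suspects)


# One failed attempt against each of six accounts from a single source.
events = [("203.0.113.7", f"user{i}") for i in range(6)]
# A single user mistyping a password four times from another source.
events += [("198.51.100.4", "alice")] * 4

suspects = find_spray_sources(events)
print(suspects)  # ['203.0.113.7']
```

Fixed thresholds like these are easy for attackers to stay under, which helps explain why the report describes using AI to separate normal from targeted behavior.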

Concluding Thoughts

Leveraging the intelligence and scale of AI is essential in today’s threat environment. It’s needed for threat analytics, isolating detection gaps, validating detections, automating remediation, and much more.

An overall strategy is critical to serve as an organizing framework for these tactics. In fact, Microsoft advises that customers need a security framework to prepare for AI adoption, discover AI usage taking place in their companies, and protect sensitive data and AI models, all while governing AI applications, data, and operations.

In short, companies’ best hope of effectively fending off threats that are enabled by malicious use of AI is to deploy AI comprehensively to give them automation, speed, and scale that aren’t possible otherwise. Look for 2026 to be a pivotal year as organizations adopt AI technology and measures to protect their data and their operations.
